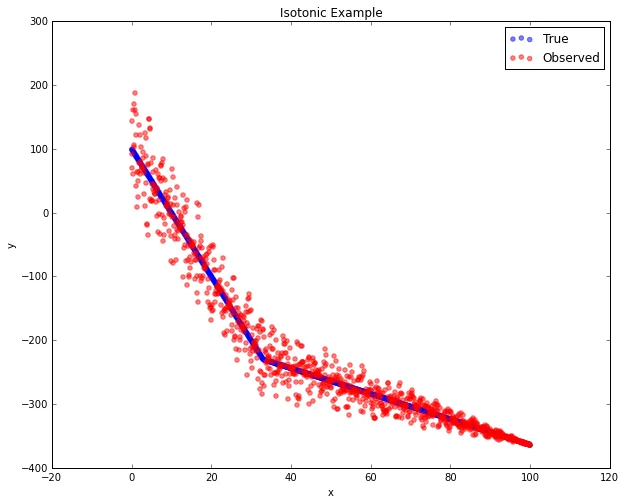

Isotonic regression is a great tool to keep in your repertoire; it's like weighted least squares with a monotonicity constraint. Imagine that the true relationship between x and y is piecewise: a sharp decrease in y at low values of x, followed by a gradual decrease in y for larger x. There is also heteroskedasticity, with greater errors at low values of x. The key is that we believe y should be non-increasing in x, i.e., monotonic: y may stay flat as x grows, but it should never go up.
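To make the "weighted least squares with a monotonicity constraint" description concrete, here is one way to write the problem for the non-increasing case. The symbols are my own notation, not anything from the notebook: $w_i$ are non-negative observation weights and $\hat{y}_i$ are the fitted values.

$$
\min_{\hat{y}_1, \dots, \hat{y}_n} \; \sum_{i=1}^{n} w_i \,(y_i - \hat{y}_i)^2
\quad \text{subject to} \quad
\hat{y}_i \ge \hat{y}_j \ \text{ whenever } \ x_i \le x_j .
$$

With all $w_i = 1$ this is ordinary least squares plus the ordering constraint; flipping the inequality gives the non-decreasing version.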

Now imagine we want to produce a smoothed or denoised version of this relationship for visualization or to regularize data for further modeling. Common smoothing methods like polynomial splines, LO(W)ESS, or non-linear least squares won't obey our monotonicity assumption without additional work. Isotonic regression, on the other hand, is explicitly designed for this purpose.
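As a rough sketch of what that looks like in practice, the snippet below simulates data with the shape described above and fits a non-increasing curve with scikit-learn. The breakpoint at x = 2, the slopes, the noise levels, and the inverse-variance weights are all illustrative assumptions of mine, not values from the notebook.

```python
import numpy as np
from sklearn.isotonic import IsotonicRegression

rng = np.random.default_rng(0)
n = 200
x = np.sort(rng.uniform(0.0, 10.0, size=n))

# Assumed "true" relationship: steep drop for x < 2, gentle decline after.
y_true = np.where(x < 2.0, 10.0 - 3.0 * x, 4.0 - 0.25 * (x - 2.0))

# Assumed heteroskedastic noise: larger errors at low x.
noise_sd = np.where(x < 2.0, 1.5, 0.3)
y = y_true + rng.normal(0.0, noise_sd)

# Fit a non-increasing step function; down-weight the noisier points.
iso = IsotonicRegression(increasing=False)
y_fit = iso.fit(x, y, sample_weight=1.0 / noise_sd**2).predict(x)
```

The fitted values in `y_fit` never increase as x grows, which is exactly the property that splines or LOWESS would not give us for free.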

In practical applications, we are probably trying to use observed values of y to predict some further z. We want to use past experience about x and y to help us better predict z. Isotonic regression handles this naturally while preserving the monotonic constraint.

In Python, this is implemented by scikit-learn's IsotonicRegression class. I recently pushed a few enhancements to that class: PR 3157 automatically determines whether y is increasing or decreasing in x based on the Spearman correlation coefficient; PR 3199 handles out-of-domain x values gracefully instead of raising a ValueError; and PR 3250 improves efficiency by storing the interpolating function at fit time.
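Here is a small sketch of how the first two enhancements look from the user's side. I'm assuming a scikit-learn release where they are exposed as `increasing="auto"` and the `out_of_bounds` parameter; the toy numbers are made up for illustration.

```python
import numpy as np
from sklearn.isotonic import IsotonicRegression

# Toy data (made up): mostly decreasing, with one violation at x = 3.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([9.0, 7.5, 8.0, 3.0, 2.5])

# "auto" infers the direction from the Spearman correlation;
# "clip" maps out-of-domain x values to the nearest fitted endpoint.
iso = IsotonicRegression(increasing="auto", out_of_bounds="clip")
iso.fit(x, y)

# The inferred direction is stored on the fitted estimator.
direction_is_increasing = iso.increasing_

# x = 0 and x = 6 fall outside the training range [1, 5].
x_new = np.array([0.0, 2.5, 6.0])
y_new = iso.predict(x_new)
```

With `out_of_bounds="nan"` the two outside points would come back as NaN, and with `"raise"` the call would fail, so `"clip"` is the option to pick when you want predictions for new x values no matter what.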

You can follow along with the Python code in the IPython notebook: nbviewer.ipython.org/urls/gist.githubusercontent.com/mjbo....